
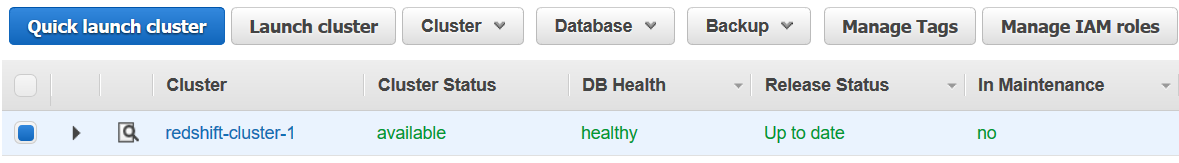

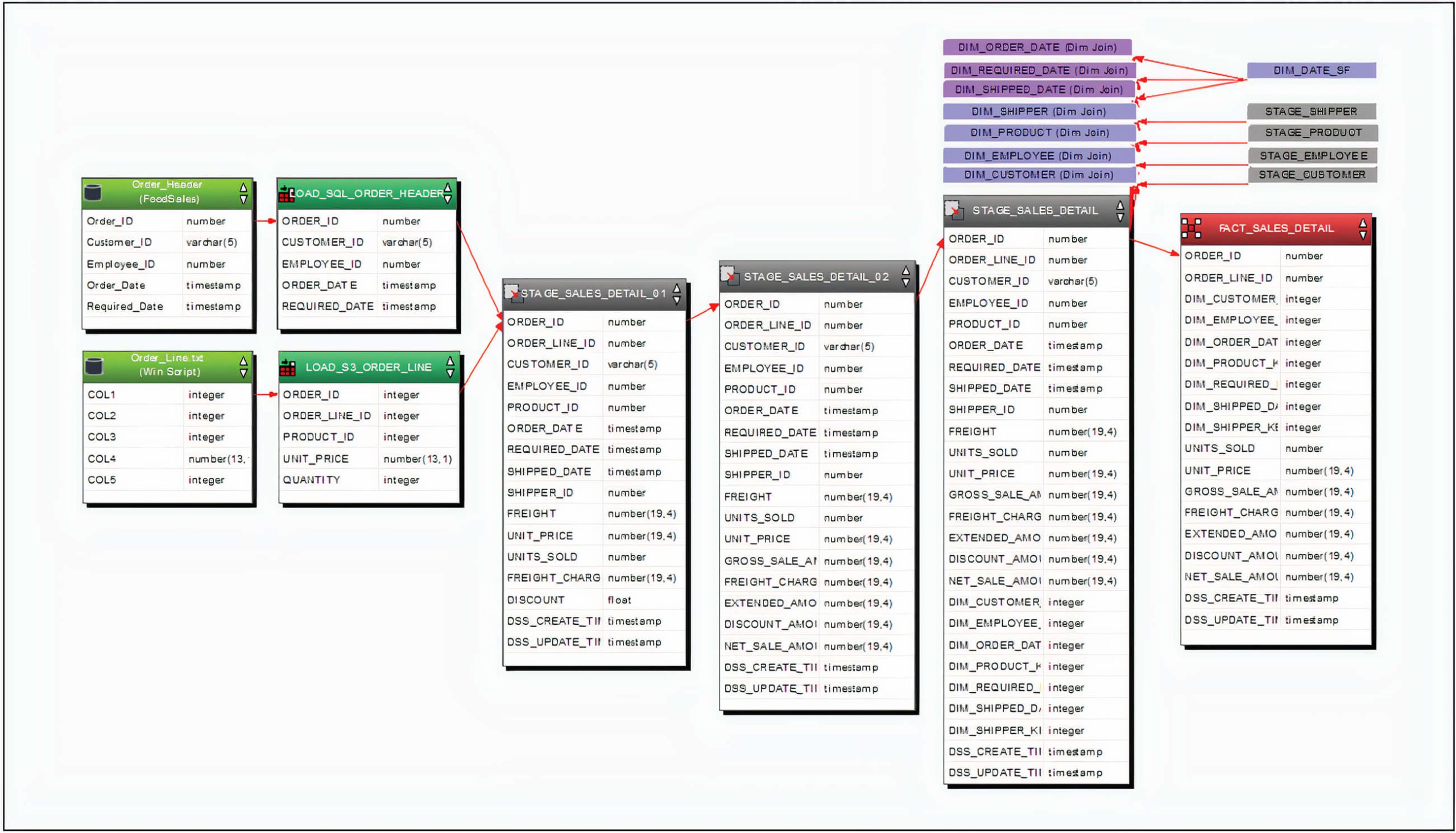

8/6/2023 0 Comments Amazon redshift sqlOk, now that we understood a bit about the Redshift Cluster let's go back to the main topic, Redshift ML :)Īnd don't worry if things are still dry for you, as soon as we jump into the demo and create a cluster from scratch, things will fall in place. The new ra3 nodes let you determine how much compute capacity you need to support your workload and then scale the amount of storage based on your needs. Amazon Redshift offers different node types to accommodate different types of workloads, so you can select which suits you the best, but its is recommended to use ra3. The node type determines the CPU, RAM, storage capacity, and storage drive type for each node. Each compute node has its own dedicated CPU, memory, and attached storage, which are determined by the node type. After that, the compute node(s) execute the respective compiled code and send intermediate results back to the leader node for final aggregation.

The leader node compiles code for individual elements of the execution plan and assigns the code to individual compute node(s). The compute nodes is the main workhorse for the Redshift cluster, and it sits behind the leader node. Few of the major tasks of the leader node is to store the metadata, coordinate with all the compute nodes for parallel SQL processing and and to generate most optimized and efficient query plan. In other words, the leader node behaves as the gateway(the SQL endpoint) of your cluster for all the clients. Once the cluster is created, the client application interacts directly only with the leader node. We don't have to define a leader node, it will be automatically provisioned with every Redshift cluster.

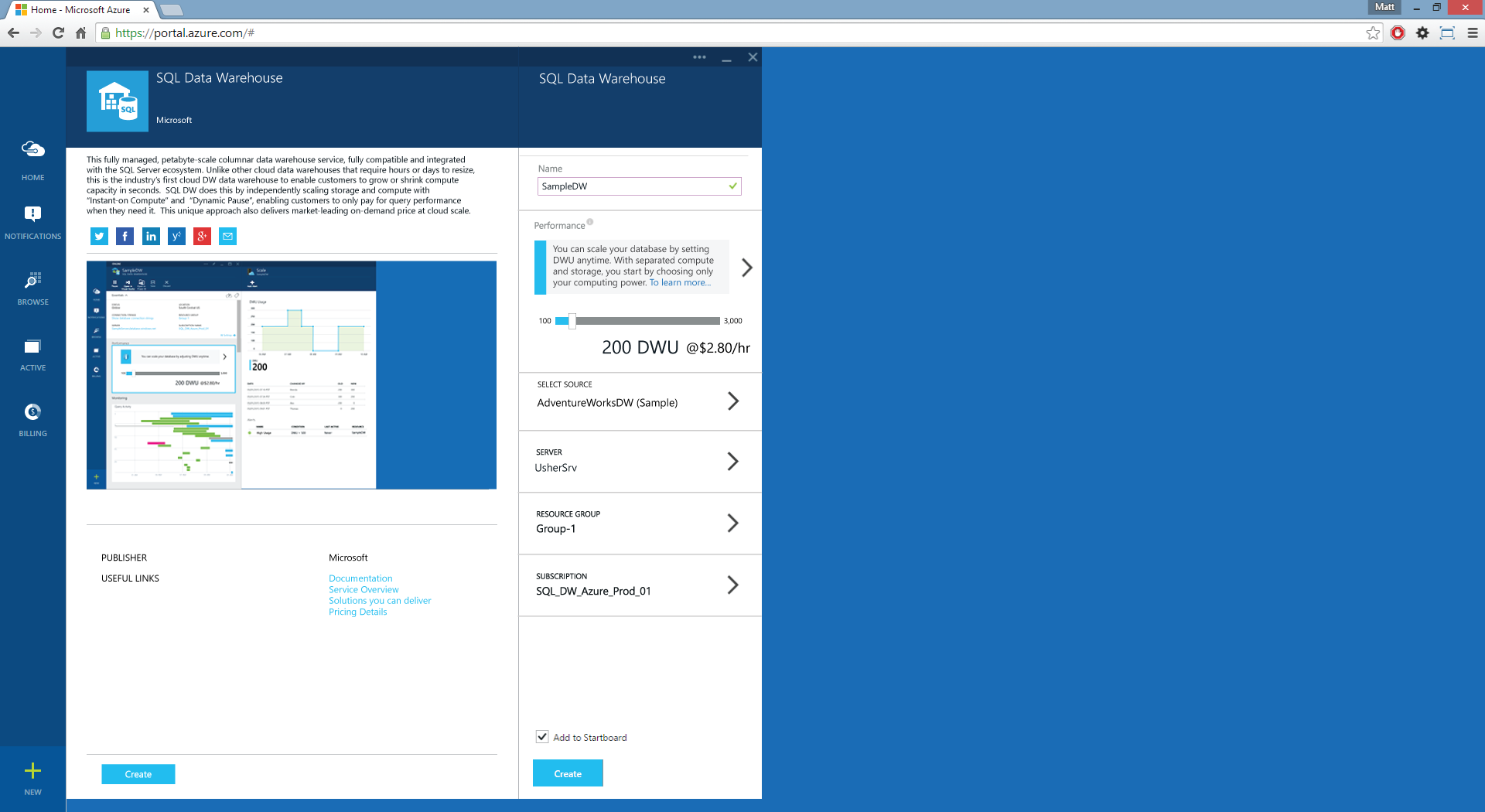

of compute nodes, then an additional leader node coordinates the compute nodes and handles external communication. If we create a cluster with two or more no. A cluster comprises of nodes, as shown in the above image, Redshift has two major node types: leader node and compute node. A cluster is composed of one or more compute nodes. The core infrastructure component of an Amazon Redshift data warehouse is a cluster. Having said that, you may like to use any other SQL Client tool like SQL Workbench/J, psql tool, etc. As Amazon Redshift is based on industry-standard PostgreSQL, most of commonly used SQL client application should work, we are going to use Jetbrains DataGrip to connect to our Redshift cluster( via JDBC connection) later while we jump into the hands-on section. Let's quickly go over few core components of an Amazon Redshift Cluster:Īmazon Redshift integrates with various data loading and ETL ( extract, transform, and load) tools and business intelligence (BI) reporting, data mining, and analytics tools. It uses massively parallel processing(MPP), columnar storage and data compression encoding schemes to reduce the amount of I/O needed to perform queries, which allows it in distributing the SQL operations to take advantage of all available resources underneath. It uses a variety of innovations to obtain very high query performance on datasets ranging in size from a hundred gigabytes to a petabyte or more. Its low-cost and highly scalable service, which allows you to get started on your data warehouse use-cases at a minimal cost and scale as the demand for your data grows. Overall, we will try to solve different problems which will help us to understand Amazon Redshift ML from a perspective of a database administrator, data analyst and an advanced machine learning expert.īefore we get started and set the stage by reviewing what is Amazon Redshift?Īmazon Redshift is a fully managed, petabyte-scale data warehousing service on the AWS. 
I am a Data Scientist - How can I make use of this ?.I am a Data Analyst - What's about me ?.I am a Database Administrator - What's in for me ?Īnd in the Part-2, we will take that learning beyond and cover the following:.
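Before the demo, here is a toy model that makes the leader/compute split described above more concrete. It is essentially a scatter-gather pattern. This minimal Python sketch is only a mental model and is not how Redshift's engine is implemented. The function names (`distribute_rows`, `compute_node_partial_sum`, `leader_node_query`) and the sample data are hypothetical. Threads stand in for compute nodes. For brevity, rows are spread round-robin at query time, roughly in the spirit of the EVEN distribution style. A real cluster distributes table rows when the data is loaded.

```python
"""Toy scatter-gather model of MPP query execution (hypothetical names; not Redshift internals)."""
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def distribute_rows(rows, num_compute_nodes):
    """Spread rows round-robin across compute nodes.

    Simplification: a real cluster distributes rows at load time, not per query.
    """
    slices = [[] for _ in range(num_compute_nodes)]
    for i, row in enumerate(rows):
        slices[i % num_compute_nodes].append(row)
    return slices


def compute_node_partial_sum(rows):
    """One compute node: SUM(amount) GROUP BY region over only its own rows."""
    partial = Counter()
    for region, amount in rows:
        partial[region] += amount
    return partial


def leader_node_query(rows, num_compute_nodes=2):
    """Leader node: hand out work, let nodes run concurrently, then aggregate partial results."""
    slices = distribute_rows(rows, num_compute_nodes)
    with ThreadPoolExecutor(max_workers=num_compute_nodes) as pool:
        partials = list(pool.map(compute_node_partial_sum, slices))
    final = Counter()
    for partial in partials:
        final.update(partial)  # Counter.update adds counts instead of replacing them
    return dict(final)


if __name__ == "__main__":
    # Hypothetical (region, amount) rows
    sales = [("east", 10), ("west", 5), ("east", 7), ("north", 3), ("west", 1)]
    print(leader_node_query(sales, num_compute_nodes=2))
```

SUM is decomposable, so each node can aggregate its own rows independently. The leader then only has to combine a handful of partial results instead of scanning every row itself. Changing `num_compute_nodes` changes how the work is split, but it does not change the answer.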
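The overview above credits columnar storage and compression encodings with reducing the I/O a query needs. The toy Python illustration below shows that idea under simplifying assumptions. The table, column names and values are made up. Run-length encoding is used only as one simple example of a compression encoding. None of this describes Redshift's actual on-disk format.

```python
"""Toy illustration: columnar layout plus run-length encoding (not Redshift's storage format)."""
from itertools import groupby

# Hypothetical sample table: (sale_date, region, amount)
ROWS = [
    ("2023-08-01", "east", 10),
    ("2023-08-01", "east", 7),
    ("2023-08-01", "west", 5),
    ("2023-08-02", "west", 1),
    ("2023-08-02", "north", 3),
]
COLUMN_NAMES = ["sale_date", "region", "amount"]


def to_columns(rows, names):
    """Pivot row tuples into one list per column (a columnar layout)."""
    return {name: [row[i] for row in rows] for i, name in enumerate(names)}


def run_length_encode(values):
    """Collapse consecutive repeated values into (value, run_length) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]


def run_length_decode(runs):
    """Expand (value, run_length) pairs back into the original list."""
    return [value for value, count in runs for _ in range(count)]


columns = to_columns(ROWS, COLUMN_NAMES)

# Simplified row store: reading any row reads all of its fields, so SUM(amount) touches everything.
values_read_row_store = sum(len(row) for row in ROWS)
# Column store: SUM(amount) only needs the amount column.
values_read_column_store = len(columns["amount"])
# Repeated dates collapse to a couple of runs.
encoded_dates = run_length_encode(columns["sale_date"])

print("values read (row store):", values_read_row_store)        # 15
print("values read (column store):", values_read_column_store)  # 5
print("encoded sale_date:", encoded_dates)  # [('2023-08-01', 3), ('2023-08-02', 2)]
print("SUM(amount):", sum(columns["amount"]))  # 26
```

Both effects compound. A query reads only the columns it references, and columns with long runs of repeated values shrink to a few (value, count) pairs before they are ever read.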
